Opportunities in GenAI model deployment

The Problem

Organizations across a range of sectors are racing to adopt generative AI. Investors poured $14.1B into generative AI startups in 2023, and Bloomberg projects that generative AI will be a $1.3T industry by 2032.

Two obstacles stand in the way of widespread AI model deployment: hardware availability and implementation complexity.

On the hardware front, demand for top-performing GPUs is outstripping supply. Nvidia sold out of several lines of GPUs in 2023, and AI firms must compete for the top-performing chips that allow them to deploy state-of-the-art models. GPU cost can also present a challenge for AI startups. Nvidia’s A100 chip costs roughly $10,000, and because GPUs advance so quickly, chips become obsolete fast.

On the implementation front, model deployment still requires significant ML engineering expertise. Teams must be able to provision modern hardware, develop data pipelines, train models, deploy models, and monitor model performance.

Despite widespread interest in generative AI, many tech firms struggle to deploy LLMs/stable diffusion models due to implementation complexity and barriers to hardware access.

The Solution Space

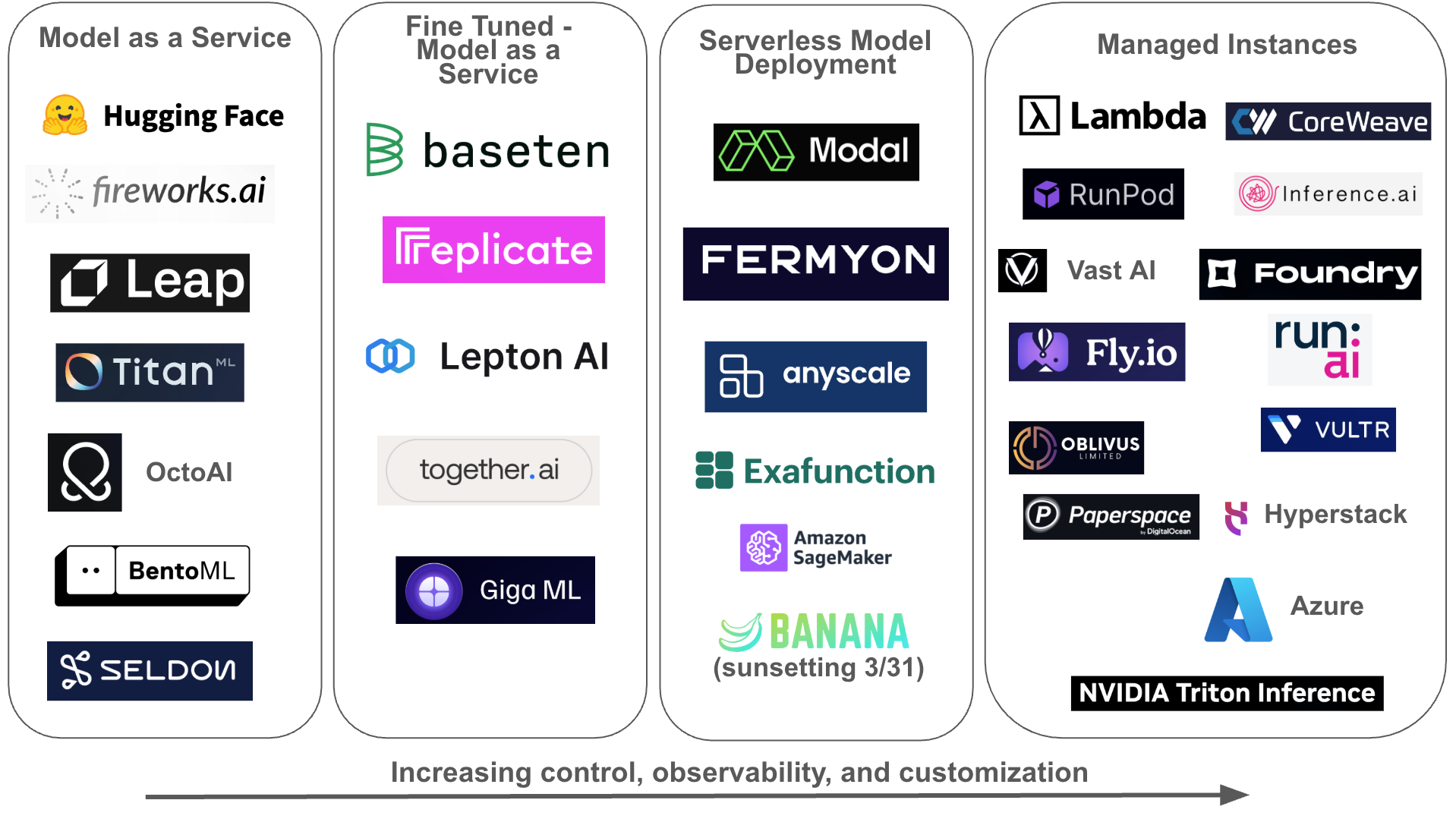

Dozens of startups are working to make model deployment easier, cheaper, and less risky. Some emphasize simplicity and speed, while others emphasize control and customization. Solutions generally fall into four buckets, ordered below from “simplest” to “most customizable”:

- Model as a Service (MaaS). Deliver existing open-source models through open APIs.

- Fine-Tuned MaaS. Enable customers to fine-tune open-source models with proprietary datasets, then deploy those models with minimal code/configuration.

- Serverless Model Deployment. Enable customers to deploy custom models through serverless functions, abstracting away hardware and software infrastructure complexity.

- Managed Instances. Enable customers to reserve single or multi-tenant GPU instances on demand, providing a high degree of control over hardware and software infrastructure.

Some model deployment companies focus on a single bucket. Others play in several buckets, providing a range of products. The market map above lists each major player in model deployment in its primary bucket.

Within these buckets, customers value the following characteristics in an ML deployment product:

- Developer experience. How quickly can a customer deploy a model — hours? days? weeks?

- Perceived reliability and performance. What is the latency of inference calls? What is the uptime of managed instances? Is telemetry built in?

- Access to complementary products. Can a customer leverage the platform for use cases other than model inference, such as standing up general-purpose APIs?

- Open-source integration, which:

  - Gives customers access to the latest in AI technology/models.

  - Empowers customers to improve the tools.

  - Gives customers confidence in project longevity.

- Cost.
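The latency question in the list above can be made concrete with a small measurement harness. The following is a minimal sketch, not any vendor's tooling: `measure_latencies`, `summarize`, and `fake_inference` are hypothetical names, and `fake_inference` stands in for a real call to a deployed model.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(call: Callable[[], object], n: int = 50) -> List[float]:
    """Time n sequential calls and return per-call latency in milliseconds."""
    latencies = []
    for _ in range(n):
        start = time.perf_counter()
        call()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize(latencies_ms: List[float]) -> Dict[str, float]:
    """Return the median (p50) and 95th-percentile (p95) latency."""
    # quantiles(n=100) returns the 99 cut points p1..p99; index 94 is p95.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": statistics.median(latencies_ms), "p95": cuts[94]}


def fake_inference() -> str:
    # Hypothetical stand-in for a real inference request to a hosted model.
    time.sleep(0.001)
    return "ok"


if __name__ == "__main__":
    print(summarize(measure_latencies(fake_inference, n=20)))
```

Reporting a tail percentile alongside the median matters here because occasional slow calls, such as cold starts on serverless platforms, can dominate the experience even when the typical call is fast.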

Opportunities and Challenges

The 2010s were a boom time for cloud infrastructure firms. As business exploded for Web 2.0 startups like DoorDash, Uber, and Airbnb, cloud services enabled these companies to scale quickly without sacrificing performance. A handful of infrastructure providers won big. AWS generated $91B in revenue in 2023.

Just as AWS solved problems for Web 2.0 startups, “model deployment” firms are filling a need for the latest wave of AI startups. The question is: which firms will be able to capture that value and build a profitable business?

Today’s model hosting startups will face significant challenges around competition and supplier power.

Let’s start with competition. Competitors in this category are extraordinarily well-funded. Dominant cloud infrastructure providers like AWS, GCP, and Azure compete across all four “model deployment” buckets and have a leg up through existing customer relationships. Startups in the space are also well capitalized. Lambda Labs raised a $320M Series C in February, Together.ai raised a $102.5M Series A in November, and CoreWeave recently announced a minority investment of $642M. War chests this large are leading to tough competition on price: earlier this month, seed-stage startup Banana announced it would sunset its serverless GPU product.

Supplier power will also hinder firms’ profitability. Nvidia commands 87% of the discrete GPU (dGPU) market, with AMD and Intel following distantly at 10% and 3%. Nvidia’s market power will allow it to raise prices over time, cutting into margins for “model deployment” service providers. Because Nvidia is also a cloud services provider (its Triton Inference Servers are popular for model deployment), it will be able to squeeze startups both as a competitor and as a supplier. Some startups have attempted to address supply uncertainty through partnerships, joining the NVIDIA Partner Network. However, looming regulation calls the benefit of these partnerships into question: the FTC recently launched an inquiry into AI partnerships.
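To put those market shares in perspective, one common measure of concentration is the Herfindahl-Hirschman Index (HHI), the sum of squared market shares expressed in percentage points. The sketch below applies it to the dGPU shares quoted above. The function name `hhi` is illustrative, and the calculation assumes these three vendors account for the whole market.

```python
def hhi(shares_percent):
    """Herfindahl-Hirschman Index: sum of squared market shares (in percent)."""
    return sum(share ** 2 for share in shares_percent)


# dGPU market shares as quoted in the text (assumed to sum to the full market).
dgpu_shares = {"Nvidia": 87, "AMD": 10, "Intel": 3}

index = hhi(dgpu_shares.values())
print(index)  # 7678
```

The result, 7,678 out of a maximum of 10,000, sits far above the 1,800 to 2,500 range that US antitrust guidelines have used to mark a highly concentrated market.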

Due to tough competition and supplier power, startups that compete on simple “GPU-as-a-Service” products will struggle.

Opportunities

That being said, substantial opportunities do exist in a few subcategories of model deployment. These include:

AI observability tools. Datadog set the standard for Web 2.0 telemetry tooling. Observability is becoming even more important in AI applications, where app behavior can be more unpredictable. Helicone, which offers an open-source observability platform for generative AI, is a startup to watch. Similarly, model hosting tools like Modal and Seldon that offer built-in observability features have a competitive advantage.

Inference at the edge. Performance matters to AI application developers, and bringing models closer to end users will lead to snappier experiences. Fly.io, which provides GPU inference servers in 38 countries, has a head start in this niche. The trend toward small language models (SLMs) is also worth tracking. SLMs like Vicuna can run on end-user devices and do not require internet connectivity. As the quality of SLMs improves, look out for tools that make deployment to mobile devices easy.

Social Marketplaces. The pace of AI model development will continue to accelerate as more developers participate. Startups like Hugging Face and Replicate, which have built vast developer communities, will benefit from network effects that draw developers into their tools.

While big tech companies have obvious advantages in model deployment services – access to hardware, complementary products, and large existing customer bases – they by no means own the space.

Conclusion

Model deployment is a hotly contested category. Although some venture firms are investing hundreds of millions of dollars in general-purpose GPU clouds, some of the best opportunities lie in niches like observability.